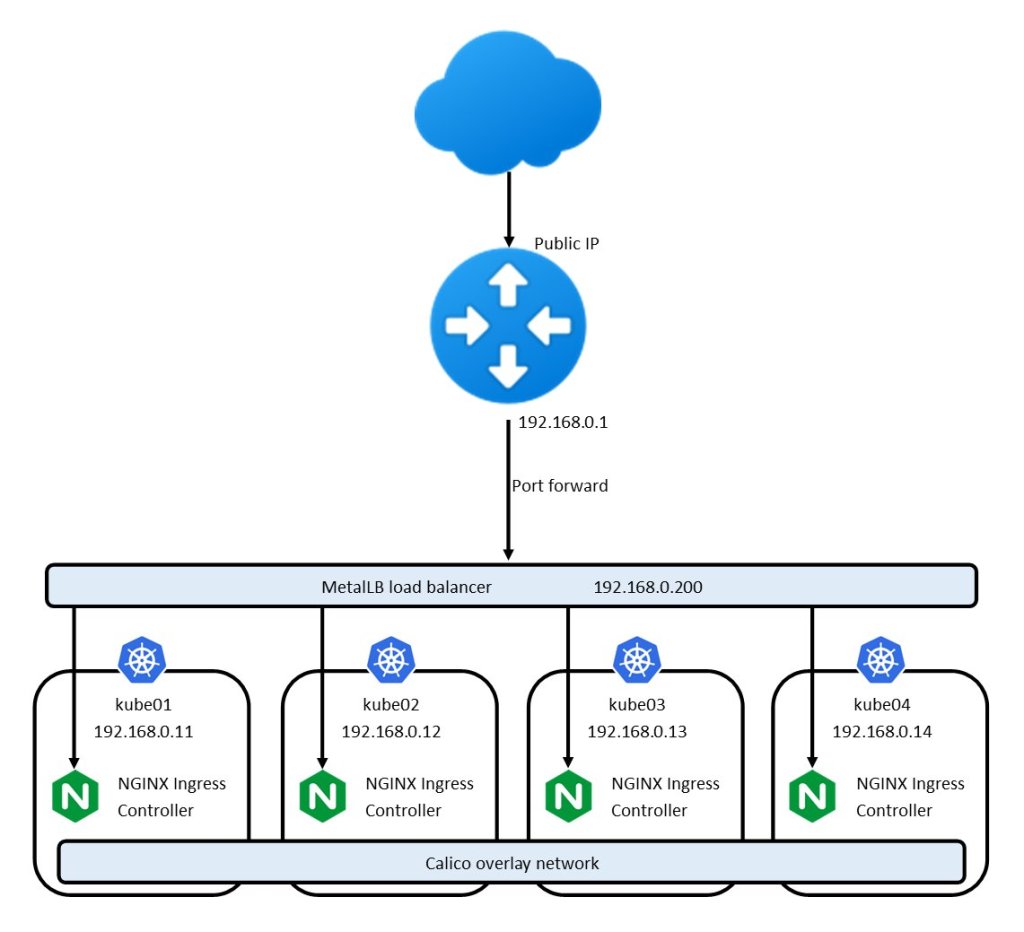

Welcome to part 6 of my Kubernetes Homelab series. In the previous posts we’ve discussed the architecture of the hardware, networking, Kubernetes cluster, and infrastructure services. Today we’re going to look at deployment strategies for applications on Kubernetes.

My goal for my homelab is not necessarily 100% automation, but I would like the ability to deploy applications with a consistent config, redeploy them if necessary, manage upgrades, have versioned config, handle secrets safely, and to reduce the scope for human error.

Full CD or GitOps tools like Argo CD, Flux CD and others are a bit of a heavy hammer for this homelab environment, so let’s have a look at what it’s possible to achieve with a very lightweight solution.

Helm

Helm brands itself as a package manager for Kubernetes. Personally, I think calling it a package manager is a bit of a stretch, as it lacks key functionality that we’ve come to expect from other package managers such as yum, pip and other distro- and language-specific package managers.

What Helm can do is install and upgrade an application using a config file (which Helm calls a values file) that we can keep in git.

So I have created a private git repo called kubernetes-manifests (in hindsight I could’ve chosen something shorter), which contains a directory for each app I want to deploy. That directory contains a README to explain what the app is, a values file, and a deployment script.

```
aboutme/
├── deploy.sh
├── README.md
└── values.yaml
```

Every Helm chart ships with a values.yaml file that lists the chart’s configurable values (i.e. config options) along with their defaults. I usually copy that file into my repo and edit it for my use case, removing redundant options to keep the file short.

The deployment script just wraps a Helm command so I don’t forget which args to pass next time I want to deploy.
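Every app directory has the same three files, so creating a new one can be scripted too. The sketch below is a hypothetical helper. The name new_app is mine and is not a Helm feature. It dumps the chart’s default values with helm show values, writes a deploy.sh in the same shape as the Homer example that follows, and leaves a README stub. It assumes the chart’s repository has already been added with helm repo add.

```sh
#!/bin/sh
# Hypothetical helper: scaffold a new app directory in kubernetes-manifests.
# Usage: new_app DIR NAMESPACE RELEASE CHART
new_app() {
    dir=$1 namespace=$2 release=$3 chart=$4
    if [ -e "$dir" ]; then
        echo "new_app: $dir already exists" >&2
        return 1
    fi
    mkdir -p "$dir" || return 1

    # Start from the chart's own values.yaml, then trim it by hand afterwards
    helm show values "$chart" > "$dir/values.yaml" || { rm -rf "$dir"; return 1; }

    # Record the Helm invocation so the args don't have to be remembered later.
    # Unquoted heredoc: the variables are expanded and \\ becomes a literal \
    cat > "$dir/deploy.sh" <<EOF
#!/bin/sh
helm upgrade -i --create-namespace \\
  -n $namespace $release \\
  -f values.yaml \\
  $chart
EOF
    chmod +x "$dir/deploy.sh"

    printf '# %s\n\nTODO: explain what this app is.\n' "$release" > "$dir/README.md"
}
```

For example, new_app aboutme about about djjudas21/homer would produce the aboutme/ layout shown above. The values.yaml it creates still needs trimming and editing by hand.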

Let’s have a look at a real example: my About Me page, which I use as a bio site to pull some links together. It deploys an app called Homer.

```sh
#!/bin/sh
helm upgrade -i --create-namespace \
  -n about about \
  -f values.yaml \
  djjudas21/homer
```

My values.yaml is derived from the upstream values.yaml, with my config added: a YAML structure listing all the links that appear on the live site.

Using helm upgrade -i (short for --install) instead of helm install tells Helm to upgrade the release if it already exists, or install it if it doesn’t. That means I can safely run the deploy.sh script at any time. It will install the app if needed, upgrade it to the newest chart in my local repo cache, and apply any changed values. If nothing has changed, the running app is left as it is, although Helm still records a new release revision.

This satisfies my requirements of being able to make repeatable deployments, redeploy apps in the case of cluster loss, upgrade them at will, and keep my config in version control.
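Because each deploy.sh can be re-run safely, rebuilding after cluster loss can be as simple as running all of them. Here is a minimal sketch. It assumes the one-directory-per-app layout above and uses a hypothetical deploy_all helper, which is not something in my repo. It runs each script from inside its own directory, because deploy.sh refers to values.yaml (and secrets.yaml) by relative path.

```sh
#!/bin/sh
# Hypothetical helper: run every */deploy.sh in the manifests repo, e.g. after
# rebuilding the cluster. Keeps going after a failure and reports it at the end.
# Usage: deploy_all [REPO_DIR]
deploy_all() {
    repo=${1:-.}
    failed=0
    for script in "$repo"/*/deploy.sh; do
        [ -f "$script" ] || continue   # the glob matched nothing
        app_dir=$(dirname "$script")
        echo "==> Deploying $(basename "$app_dir")"
        # Subshell, so the cd does not leak into the loop
        if ! (cd "$app_dir" && sh ./deploy.sh); then
            echo "!! $(basename "$app_dir") failed" >&2
            failed=1
        fi
    done
    return "$failed"
}
```

The glob runs the apps in alphabetical order and knows nothing about dependencies between them. Infrastructure that other apps rely on, such as storage or ingress, may still need to be deployed first by hand.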

Secrets

The above example of my About Me app is a simple one because no secrets are required to deploy. What if I needed to provide the app with credentials, API keys or other secrets? I wouldn’t want to store those in git.

I use the Helm Secrets plugin with age to store secrets encrypted in a git repo. Under the hood, Helm Secrets uses sops to do the encryption. I won’t go into the full setup procedure because it is already documented, but let’s have a look at how it works in practice.

For this example, let’s look at a photo sharing app called PhotoPrism. Its default values.yaml expects you to set the initial admin password, which is mixed in with a bunch of other config values:

```yaml
# -- environment variables. See docs for more details.
env:
  # -- Set the container timezone
  TZ: UTC
  # -- Photoprism storage path
  PHOTOPRISM_STORAGE_PATH: /photoprism/storage
  # -- Photoprism originals path
  PHOTOPRISM_ORIGINALS_PATH: /photoprism/originals
  # -- Initial admin password. **BE SURE TO CHANGE THIS!**
  PHOTOPRISM_ADMIN_PASSWORD: "please-change"
```

It’s possible to encrypt the entire values.yaml, but I prefer to encrypt only the secret values so the non-secrets are still easy to read. Helm lets you supply multiple values files, so let’s split the secrets out into a separate file called secrets.yaml, leaving the publicly readable values in values.yaml. Helm merges the env map from both files at deploy time.

secrets.yaml:

```yaml
env:
  # -- Initial admin password. **BE SURE TO CHANGE THIS!**
  PHOTOPRISM_ADMIN_PASSWORD: "please-change"
```

values.yaml:

```yaml
# -- environment variables. See docs for more details.
env:
  # -- Set the container timezone
  TZ: UTC
  # -- Photoprism storage path
  PHOTOPRISM_STORAGE_PATH: /photoprism/storage
  # -- Photoprism originals path
  PHOTOPRISM_ORIGINALS_PATH: /photoprism/originals
```

Now we can encrypt secrets.yaml without affecting the readability of values.yaml.
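Before encrypting, helm secrets (via sops) needs to know which key to encrypt for. One common way to tell it is a .sops.yaml file at the root of the repo. sops looks for that file in the current directory and its parents, so one copy covers every app directory. The sketch below assumes an age key pair created with age-keygen -o ~/.config/sops/age/keys.txt, which is where sops looks for age private keys on Linux by default (SOPS_AGE_KEY_FILE overrides it). write_sops_config is a hypothetical helper name.

```sh
#!/bin/sh
# Hypothetical helper: write a .sops.yaml that encrypts every file named
# secrets.yaml for the given age public key (recipient).
# Usage: write_sops_config AGE_PUBLIC_KEY [REPO_DIR]
write_sops_config() {
    recipient=$1
    repo=${2:-.}
    # Unquoted heredoc: $recipient is expanded, \$ becomes a literal $ and
    # \. is passed through unchanged, so path_regex reads secrets\.yaml$
    cat > "$repo/.sops.yaml" <<EOF
creation_rules:
  - path_regex: secrets\.yaml\$
    age: $recipient
EOF
}
```

For example, write_sops_config "$(age-keygen -y ~/.config/sops/age/keys.txt)" derives the public key from the private key file. The .sops.yaml file is safe to commit; keys.txt is not.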

```
$ helm secrets enc secrets.yaml
Encrypting secrets.yaml
Encrypted secrets.yaml
```

The contents of the file are encrypted and safe to check into git:

```yaml
env:
    #ENC[AES256_GCM,data:fhKUc7wQ1aRoUI2QWnu37hiIG84R5gAOvMr8mvGXRz7DpmxCgf7VRxtJtfOtjfA6gvgp33ocfg==,iv:Ldvmh5KSN1++g0VHRY01S5PQXQf51No7SXkahtSMi14=,tag:NM5wiY9Bl0VwrxFTAsm+Gw==,type:comment]
    PHOTOPRISM_ADMIN_PASSWORD: ENC[AES256_GCM,data:7Tt9mKkZ+U7zAtskQw==,iv:37AJkEmUk8VaA3wSaH5jPc2VwIB/hXCxM/FFxa9fPTc=,tag:S4hts6NO6zDA+DGsttIdoQ==,type:str]
sops:
    kms: []
    gcp_kms: []
    azure_kv: []
    hc_vault: []
    age:
        - recipient: age1xeguyqecm3zx2talea7jfawpgzfymula3f9e7cyr76czeh3qdqhs6ap9sp
          enc: |
            -----BEGIN AGE ENCRYPTED FILE-----
            YWdlLWVuY3J5cHRpb24ub3JnL3YxCi0+IFgyNTUxOSBSQXpnczVlUWUrUGN3NkZM
            TndmVkdnOEY5UlJWMkFDWm1IL2tuUFozdndNCnQyYjVTVC9DKy9IV0s0SkdRbnFC
            bWNwRjBsNC9aNzcrenQ0MG1weWk5SGMKLS0tIGFLRlMrNmtEMVRJd1h5cU83QnI5
            TGdraWVHdjk2djZpUmN3bW05V2lsaUEKCKlvHPTDmr6sDCkqderSk0f+3w7x87pZ
            V5XZxx5cblsq9tYTutA2tnxxuLFloY/jFen2wdsvHSrxhmCwjdsJ9Q==
            -----END AGE ENCRYPTED FILE-----
    lastmodified: "2023-04-18T14:25:13Z"
    mac: ENC[AES256_GCM,data:F3pV6Ly+eP5ZfMTerWxfrgOny/CK6O2M3bhAQLM6+SxpmK2Ya+9rDYskcKSLaq8w7WVWJ/XAz3plg2Gx8gCAYJ+2SMgo6TVsENO8tu/xMRSgmbr6NViOlHTvNc/EcYl5NOj420r8TmF31B5OArvH4BSfoTijphKppnv/546hUco=,iv:2uwIeUXUVZigJR0j0FH2gYt4KlXAx9OMHh0yx52NqMw=,tag:ET16bfdlua7E8c0n9tGC3Q==,type:str]
    pgp: []
    unencrypted_suffix: _unencrypted
    version: 3.7.3
```

We can easily view or edit this file with helm secrets view secrets.yaml or helm secrets edit secrets.yaml.
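The whole point is that only ciphertext reaches git, so it can be worth guarding against committing or deploying a secrets.yaml that was never encrypted. Here is a minimal, heuristic sketch. It relies on something visible in the encrypted file above: sops appends a top-level sops: block with a mac: entry to every file it encrypts. is_sops_encrypted and check_secrets are hypothetical names. The check does not prove the file can actually be decrypted.

```sh
#!/bin/sh
# Hypothetical guard against committing or deploying plaintext secrets.
# sops adds a top-level "sops:" block containing a "mac:" entry to every file
# it encrypts, so a file missing either one has not been encrypted.
is_sops_encrypted() {
    [ -f "$1" ] &&
        grep -q '^sops:' "$1" &&
        grep -q '^ *mac: ENC\[' "$1"
}

# Check every file given as an argument; report each plaintext file and
# return 1 if any were found (0 if all are encrypted, or no files given).
check_secrets() {
    status=0
    for f in "$@"; do
        if ! is_sops_encrypted "$f"; then
            echo "check_secrets: $f is not sops-encrypted" >&2
            status=1
        fi
    done
    return "$status"
}
```

You could call check_secrets secrets.yaml || exit 1 near the top of a deploy.sh. You could also call it from a git pre-commit hook with check_secrets $(git diff --cached --name-only --diff-filter=ACM -- '*secrets.yaml') so the commit is blocked before plaintext ever lands in history.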

The last piece of the puzzle is to tweak deploy.sh so it can decrypt our secrets on the fly. We do this by changing helm upgrade -i to helm secrets upgrade -i and specifying two values files with -f. Where values files conflict, the file further to the right takes precedence. In this case both files define an env key, but because env is a map, Helm merges the keys from both files rather than letting one file’s env replace the other’s.

```sh
#!/bin/sh
helm secrets upgrade -i --create-namespace \
  -n photoprism photoprism \
  -f values.yaml -f secrets.yaml \
  djjudas21/photoprism
```

Keeping up to date

To upgrade a deployed app, we first run helm repo update to refresh our local copy of the Helm charts. Then we simply run the deploy script, which performs the Helm upgrade:

```sh
helm repo update
./deploy.sh
```

As this is just my homelab, I don’t mind running upgrades manually, and I don’t need a fully automated solution. But it would be nice to know that there are updates available for my charts, without having to go checking manually.

There is a tool called Nova which can do exactly this.

```
$ nova find --format table --show-old
Release Name            Installed    Latest     Old     Deprecated
============            =========    ======     ===     ==========
about                   8.1.5        8.1.6      true    false
oauth2-proxy            6.8.0        6.10.1     true    false
graphite-exporter       0.1.5        0.1.6      true    false
node-problem-detector   2.3.3        2.3.4      true    false
prometheus-stack        45.8.0       45.10.1    true    false
rook-ceph               v1.11.2      1.11.4     true    false
rook-ceph-cluster       v1.11.3      1.11.4     true    false
```

This output lists the outdated Helm deployments on my cluster (in the current Kubernetes context). Nova doesn’t use your local Helm chart repositories. Instead it checks ArtifactHub as an index of Helm charts, so any chart you want to check must be published there.

To update your Helm deployments, don’t forget to freshen your local Helm repositories so you have the latest charts:

```sh
helm repo update
./deploy.sh
```

At the moment, running Nova is a manual step that I do as and when I remember, but it does support output in different formats and could easily be run as a cron job or metrics exporter in the cluster.
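As a starting point for the cron job route, here is a hedged sketch that wraps the table output shown above. It prints one line per outdated release and exits non-zero when there is anything to report, which suits cron’s habit of mailing any output. The column positions come from that sample table and may change between Nova versions. check_outdated is a hypothetical name.

```sh
#!/bin/sh
# Hypothetical cron-friendly wrapper around Nova.
# Prints "release installed -> latest" for each outdated release.
# Returns 0 if everything is current, 1 if anything is outdated, 2 if nova failed.
check_outdated() {
    table=$(nova find --format table --show-old) || {
        echo "check_outdated: nova failed" >&2
        return 2
    }
    printf '%s\n' "$table" | awk '
        # Skip the header row and the ===== underline row
        $1 == "Release" || $1 ~ /^=/ { next }
        # Columns counted from the right: Installed, Latest, Old, Deprecated
        NF >= 5 && $(NF - 1) == "true" {
            printf "%s %s -> %s\n", $1, $(NF - 3), $(NF - 2)
            found = 1
        }
        END { exit (found ? 1 : 0) }
    '
}
```

To run it from cron, save it as a script that ends with a call to check_outdated and add a crontab entry such as 0 8 * * 1 /path/to/check-outdated.sh (Mondays at 08:00; the path is a placeholder). Cron runs with a minimal environment, so PATH and KUBECONFIG may need setting in the crontab for nova and the cluster credentials to be found.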

I have started writing a Nova exporter to get the output of Nova into Prometheus so I can get alerts when I have outdated deployments, but it’s not finished yet. I’ll share here when I’ve had some time to finish it off.